The Myth of Aviation Perfection

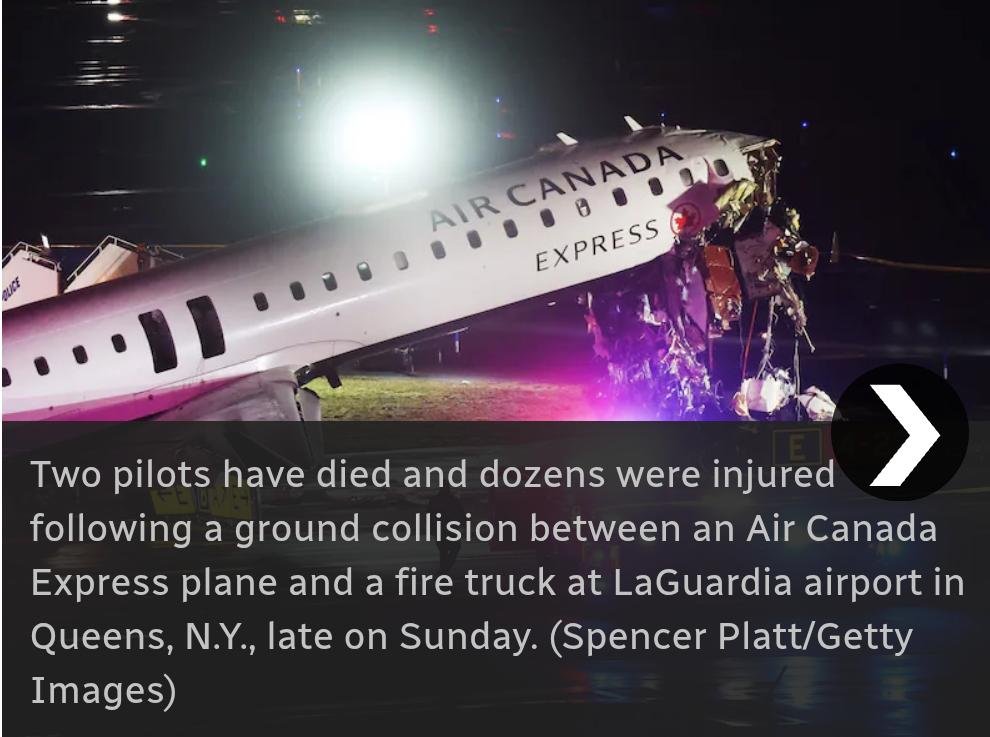

Modern aviation sells an illusion of near-perfection—layers of automation, strict protocols, and highly trained professionals. Yet the tragic 2026 collision at LaGuardia Airport shattered that illusion in seconds. A routine landing turned fatal when an aircraft struck a fire truck on an active runway, killing two pilots and injuring dozens.

This was not a catastrophic systems failure in the traditional sense. It was something far more unsettling: a chain of small, human-scale breakdowns. And that is precisely why Canada—or any country with advanced aviation systems—is not immune.

“It Only Takes One”: The Dangerous Simplicity of Runway Incursions

At its core, the LaGuardia disaster was a runway incursion—a scenario where an aircraft, vehicle, or person is incorrectly present on a runway.

That definition sounds technical. The reality is brutally simple:

one wrong clearance, one misunderstood instruction, or one delayed reaction—and metal meets metal.

Investigators have already pointed to multiple contributing factors:

A fire truck crossing the runway during landing

Failure of a key alert system due to missing transponder data

Heavy controller workload and understaffing

None of these factors alone guarantees disaster. Together, however, they create a fragile system in which a single slip becomes fatal.

Human Factors: The Weakest Link in a Strong System

Aviation systems are designed with redundancy—but humans remain the final decision-makers. And humans, unlike machines, are vulnerable to fatigue, stress, and overload.

On the night of the LaGuardia collision, one controller was reportedly managing both ground and air traffic during a surge in flights, far beyond a normal workload.

This is not uniquely American. Canadian airports—especially major hubs like Toronto Pearson or Vancouver—experience similar traffic peaks, weather disruptions, and staffing constraints.

History shows that even experienced professionals make critical errors under pressure. The 2007 San Francisco near-collision, one of the most serious runway incursions ever recorded, was attributed not to incompetence but to a momentary lapse by a seasoned controller.

The uncomfortable truth:

Expertise reduces risk—but never eliminates it.

Technology Isn’t a Safety Net—It’s a Safety Illusion

One of the most alarming revelations from LaGuardia is that a sophisticated runway warning system failed to prevent the crash because it lacked complete data: the transponder information it needed was missing.

This exposes a critical flaw in modern aviation thinking:

We assume technology will catch human mistakes. But technology itself has blind spots.

Systems depend on proper equipment (like transponders)

Alerts can be downgraded or ignored

Complex environments can confuse automated tracking

In other words, technology doesn’t eliminate risk—it redistributes it.

Canada’s aviation infrastructure is advanced, but it operates on the same principles. A missing signal, a misconfigured alert, or an untracked vehicle could create identical vulnerabilities.

The Canadian Comfort Trap

Canada is often perceived as having a safer, more conservative aviation culture. And statistically, that may be true. But safety breeds complacency.

The LaGuardia crash reveals a deeper issue:

Risk in aviation is not about geography—it’s about system complexity.

Busy airports share common characteristics:

High traffic density

Mixed aircraft and ground vehicle operations

Real-time decision-making under pressure

Whether in New York, Toronto, or Montreal, the equation is the same.

The danger lies in believing, “It can’t happen here.”

Because that belief is often the first step toward making it happen.

A System Designed to Fail—Eventually

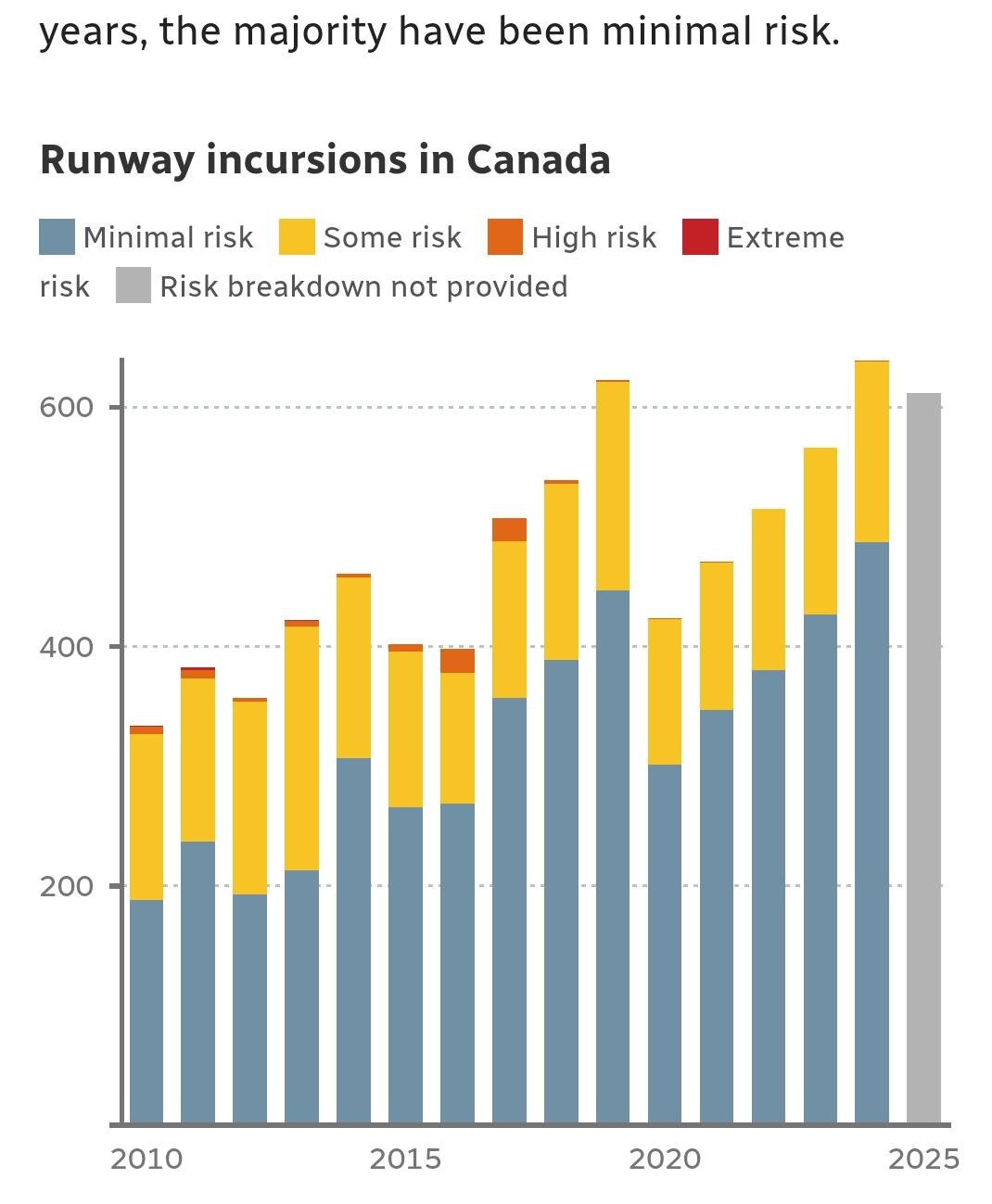

Runway incursions are not rare anomalies. They occur regularly, often without consequence—until one day, they do. FAA data shows dozens of such incidents monthly, most resolved before disaster strikes.

This creates a paradox:

The system appears safe because failures are usually invisible.

But every near-miss is a warning.

Every corrected mistake is a glimpse of what could have gone wrong.

The LaGuardia crash was not an outlier—it was an outcome.

What Must Change: From Prevention to Anticipation

If there is one lesson Canada—and the global aviation industry—must take from LaGuardia, it is this:

Safety cannot rely on perfection. It must assume failure.

That means:

Designing systems that function even when humans err

Mandating full equipment integration (no “optional” safety tech)

Reducing controller workload during peak periods

Creating fail-safes that don’t depend on a single point of judgment

Most importantly, it requires a cultural shift:

From reacting to incidents → to anticipating inevitabilities.

Conclusion: The Illusion of “Just One”

“It only takes one” is often used as a warning. But in aviation, it’s misleading.

Because it never really takes just one.

It takes:

One missed signal

One overloaded controller

One flawed assumption

One moment of hesitation

And then, suddenly, everything aligns in the worst possible way.

LaGuardia was not a freak accident.

It was a reminder.

Systems must now evolve beyond human limits, not merely accommodate them.